I hiked into Horseshoe Canyon with my friends Bill, Peter, and Kurt on 15 April 2023. We aimed to see the Barrier Canyon-style pictographs on the canyon walls. We arrived at the remote Horseshoe Canyon unit of Canyonlands National Park the night before. We were able to camp very near the trailhead.

We met Ranger Jared at the trailhead for a guided walk. Bill had called ahead and learned that ranger-guided tours were offered; joining one was an excellent idea.

One of the first things Jared pointed out was a dinosaur track.

We descended about 150 meters (500 feet) into Horseshoe Canyon during the cool morning. The trail was well maintained; this is a moderate hike. One caution: an early start would be wise in the summer months, as hiking back up in the afternoon sun would be hot work.

We saw four separate pictograph sites. First up was the High Panel, tucked into a small area among trees, a bit off the main trail. These pictographs were high off the ground. A short walk across the canyon, perhaps ten minutes, led to the Horseshoe Panel, whose pictographs were intriguing. I wondered what the artist meant to communicate with the trapezoidal figures in the panel. I had seen petroglyphs before, but this trip was my first exposure to pictographs; there was a lot to ponder.

After some time at Horseshoe Panel, we headed to the Alcove Panel.

We noticed the acoustics were interesting; we wondered what ceremonies were associated with the pictographs. Some epic tales, the equal of the Iliad in the Western tradition, might have been told at these sites.

Next, our group trekked about a mile to the Great Gallery.

The approach opened up to the initial view of the Great Gallery.

The pictographs spread over a 20-meter (60-foot) expanse of rock tucked under the cliff; this was the most complex set I’d seen. There was a repeated motif of trapezoidal figures. In this gallery, some of the darker figures had lighter shadows next to them, perhaps like the soul of the darker figures. The panel had more miniature figures; I considered them humans among the gods.

The mysterious “Holy Ghost” section left me wondering what it might mean.

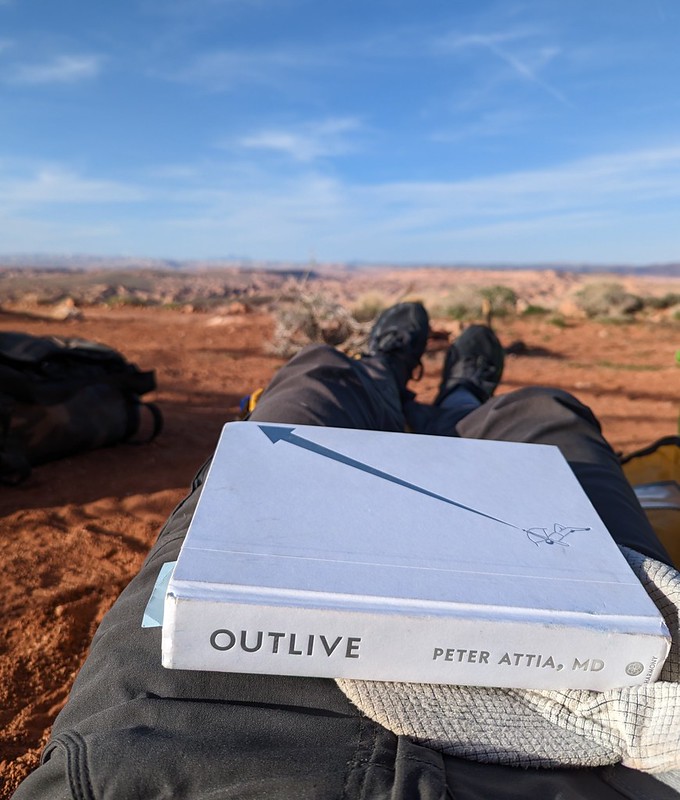

We had lunch on some benches that let us gaze at the Great Gallery and contemplate this great art. Afterward, we wandered up the canyon toward our camp.

Along the way, I spotted a butterfly near Barrier Creek. I spent a good five minutes chasing it down.

It was a Mourning Cloak butterfly, a common species I had seen in my home state of Washington. When I posted this observation on iNaturalist, I found that Nymphalis antiopa has a wide range across the Northern Hemisphere. Whether common or rare, it was still worth chasing a butterfly.

While taking a break before ascending to camp, I talked to a couple who volunteer at the park. They had seen an unusual wildflower, a paintbrush species, on the opposite rim of the canyon. They gave me directions, and I was off on a botanical boondoggle. The hike up to the opposite rim was more challenging.

I made it to the top and found the flower, a Rough Paintbrush (Castilleja scabrida).

I decided to head along the trail on this side of the canyon for about 20 minutes; the payoff was a great view of Sugarloaf Butte with the Henry Mountains in the far distance.

We had brought radios, and I could contact my friends at our camp on the opposite rim. As I headed down, I had a great view of Horseshoe Canyon. After an hour, I returned to camp and settled in for dinner. It was a fine day of hiking. As a bonus, we had some excellent stargazing in the evening.